Key Takeaways

- LLMs generate answers from pretrained knowledge, making them ideal for creativity, general queries, writing, and code generation.

- RAG enhances LLMs with real-time, external, and private data, improving accuracy, context, and reliability.

- Use an LLM for creative and open-ended tasks where exact factual correctness is not critical.

- Use RAG for business-critical applications requiring updated, compliant, and domain-specific information.

- RAG significantly reduces hallucinations by grounding responses in trusted documents.

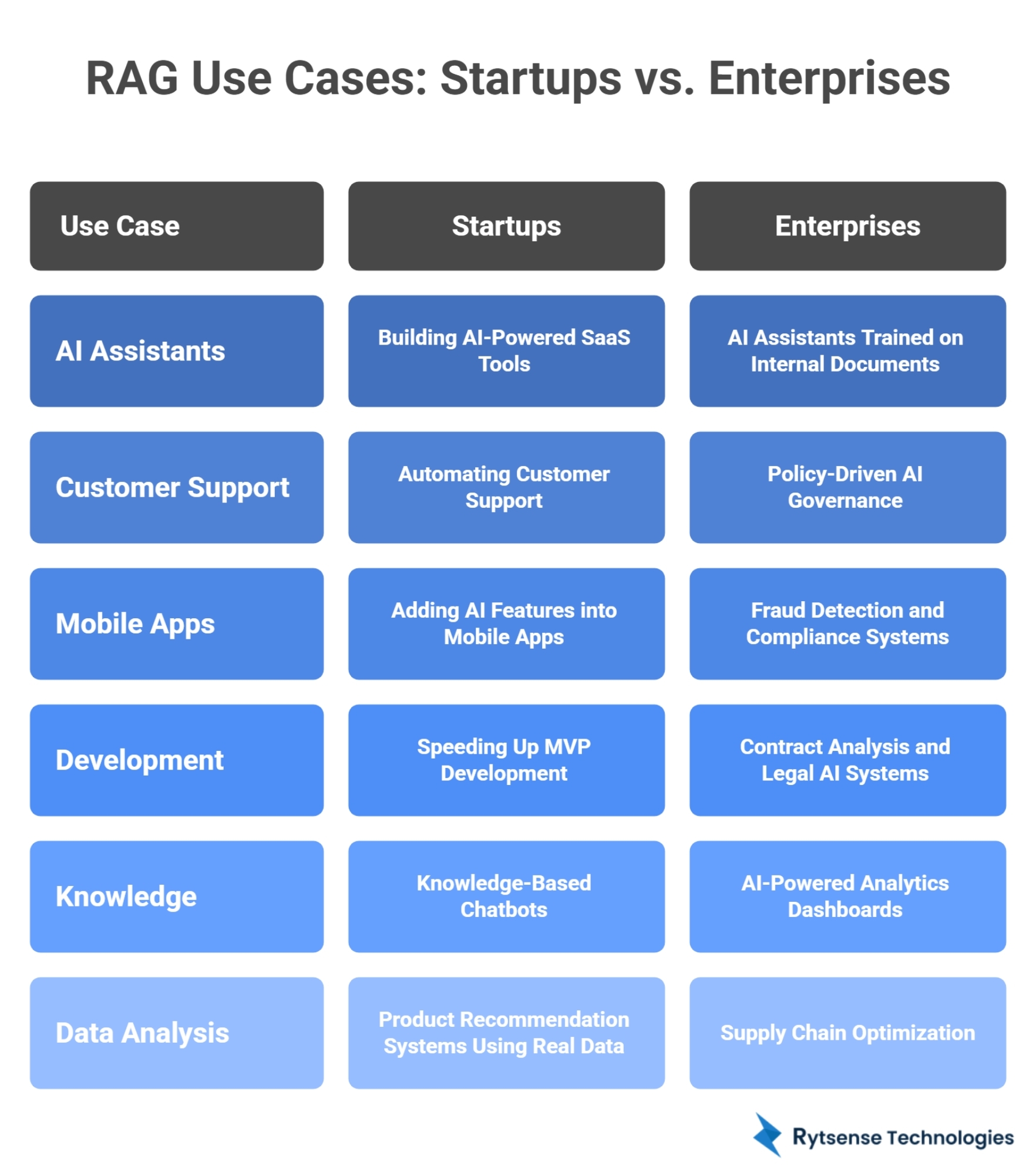

- Startups and enterprises both rely on RAG for AI assistants, customer support, automation, and analytics.

- RAG + LLM together form the new standard for enterprise AI development in 2025 and beyond.

What Is the Difference Between RAG and LLM?

The simplest difference:

- An LLM (Large Language Model) generates answers based on the knowledge it learned during training.

- RAG (Retrieval-Augmented Generation) improves an LLM by giving it fresh, accurate, external information at the moment of answering.

In short:

- ✅ LLM = knowledge from the past.

- ✅ RAG = LLM + real-time, domain-specific knowledge.

This is why modern businesses, AI developers, and enterprises rely on RAG-powered systems when they need accuracy, compliance, and up-to-date AI applications. Now let’s explore these two technologies in a deeper, simpler, and business-focused way.

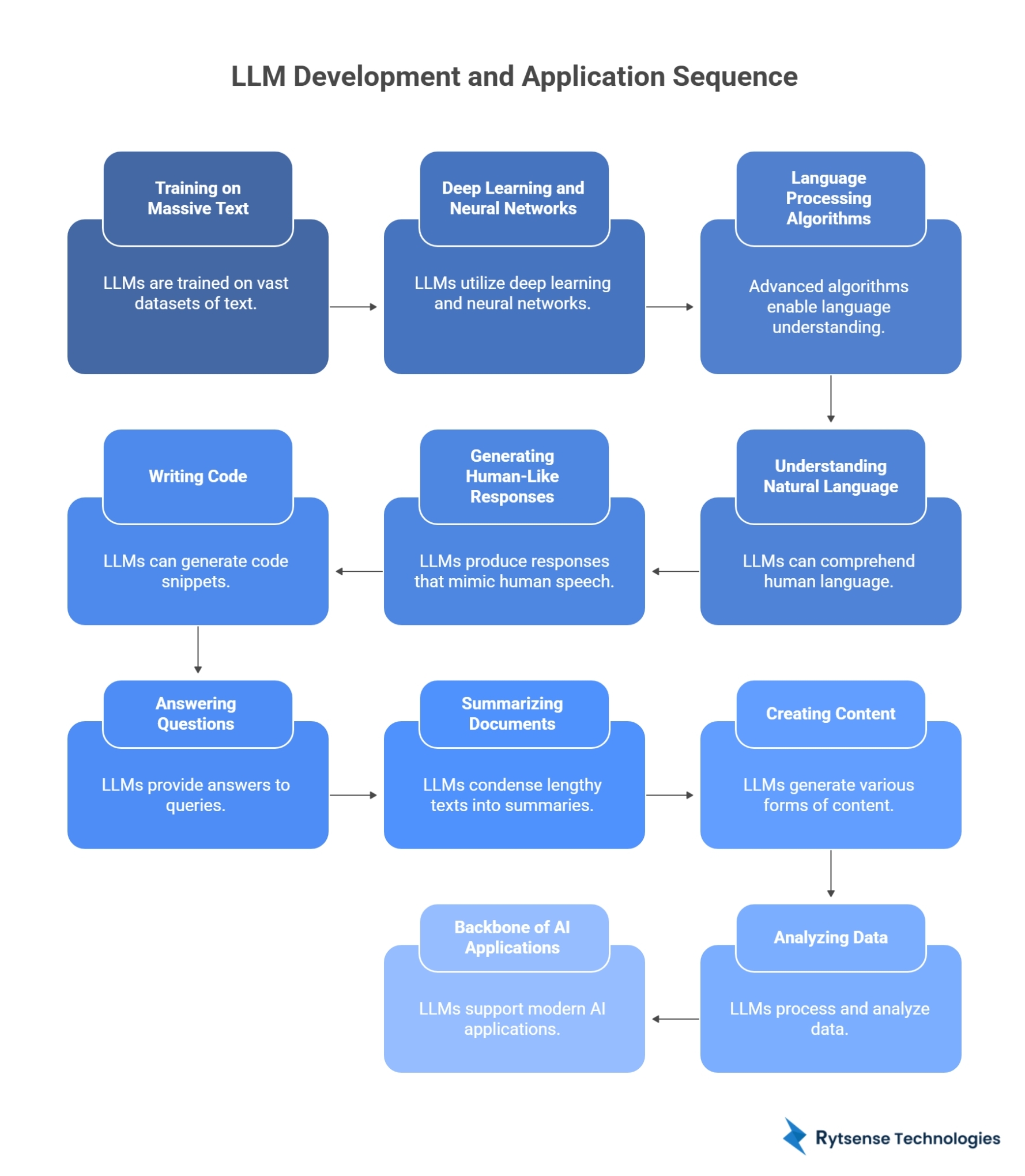

1. What Is an LLM?

A Large Language Model is an advanced AI model trained on massive amounts of text—books, websites, code, research papers, scientific articles, and more. Examples include:

- ChatGPT

- Google Gemini

- Llama

- Claude

- Mistral

An LLM is built on deep learning, neural networks, and advanced language processing algorithms. Once trained, it:

- Understands natural language

- Generates human-like responses

- Writes code

- Answers questions

- Summarizes documents

- Creates content

- Analyzes data

LLMs are the backbone of most modern AI applications, generative AI tools, AI chatbots, enterprise AI systems, and intelligent automation platforms.

2. What Is RAG and Why Was It Introduced?

RAG (Retrieval-Augmented Generation) is an AI framework that enhances an LLM by giving it external knowledge in real time.

Why was it invented?

Because LLMs have limitations:

- They cannot access private company data

- They do not know recent events

- Their training data ends at a fixed cutoff

- They may generate hallucinations

- They cannot validate information

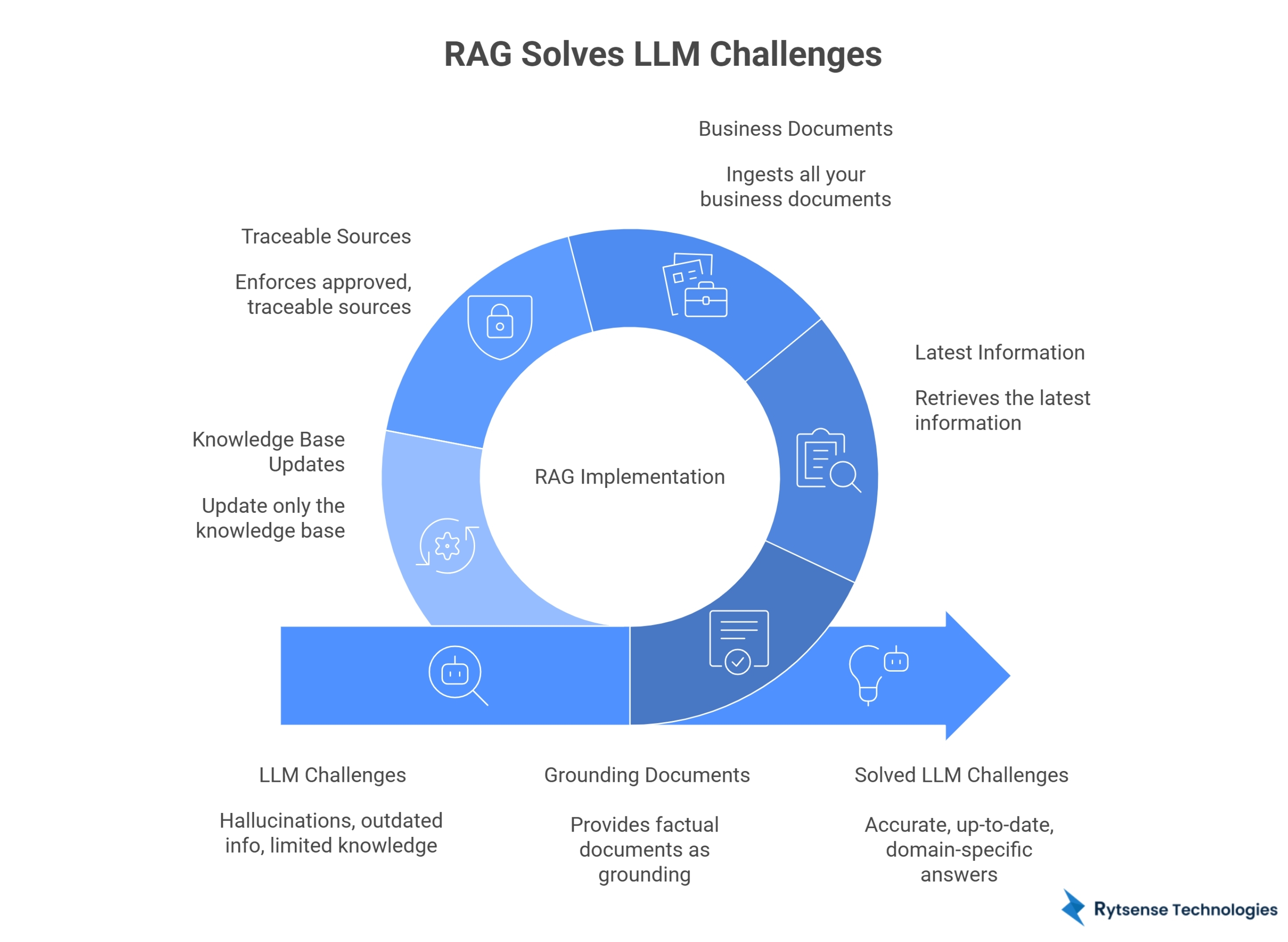

RAG solves these by:

- Retrieving relevant information from trusted sources (databases, documents, PDFs, intranet systems)

- Feeding that information into the LLM

- Generating accurate answers based on the retrieved content

RAG makes AI grounded, reliable, factual, domain-aware, and enterprise-ready.
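The three steps above (retrieve, feed, generate) can be sketched in a few lines of Python. This is a minimal illustration, not a production design: the `DOCUMENTS` dictionary, the word-overlap scoring, and the `generate` placeholder are all assumptions made for the example, and a real system would use a document store, a proper retriever, and an actual LLM client.

```python
import re

# Hypothetical in-memory "trusted source"; a real system would use a document store.
DOCUMENTS = {
    "refund_policy.txt": "Refunds are available within 30 days of purchase.",
    "shipping_policy.txt": "Standard shipping takes 5 business days.",
}

def tokenize(text):
    """Lowercase the text and split it into a set of words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))

def retrieve(question, documents, top_k=1):
    """Step 1: rank documents by how many words they share with the question."""
    question_words = tokenize(question)
    ranked = sorted(
        documents.items(),
        key=lambda item: len(question_words & tokenize(item[1])),
        reverse=True,
    )
    return ranked[:top_k]

def build_prompt(question, retrieved):
    """Step 2: feed the retrieved text into the prompt the LLM will receive."""
    context = "\n".join(f"[{name}] {text}" for name, text in retrieved)
    return (
        "Answer using only the context below.\n"
        f"Context:\n{context}\n"
        f"Question: {question}"
    )

def generate(prompt):
    """Step 3: placeholder for the LLM call (hypothetical; swap in a real client)."""
    return f"(LLM response to a {len(prompt)}-character prompt)"

question = "How many days do I have to request refunds?"
retrieved = retrieve(question, DOCUMENTS)
prompt = build_prompt(question, retrieved)
print(prompt)
print(generate(prompt))
```

The point of the sketch is the shape of the flow: the model only sees the question together with the retrieved passage, so its answer is tied to that passage rather than to whatever it remembers from training.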

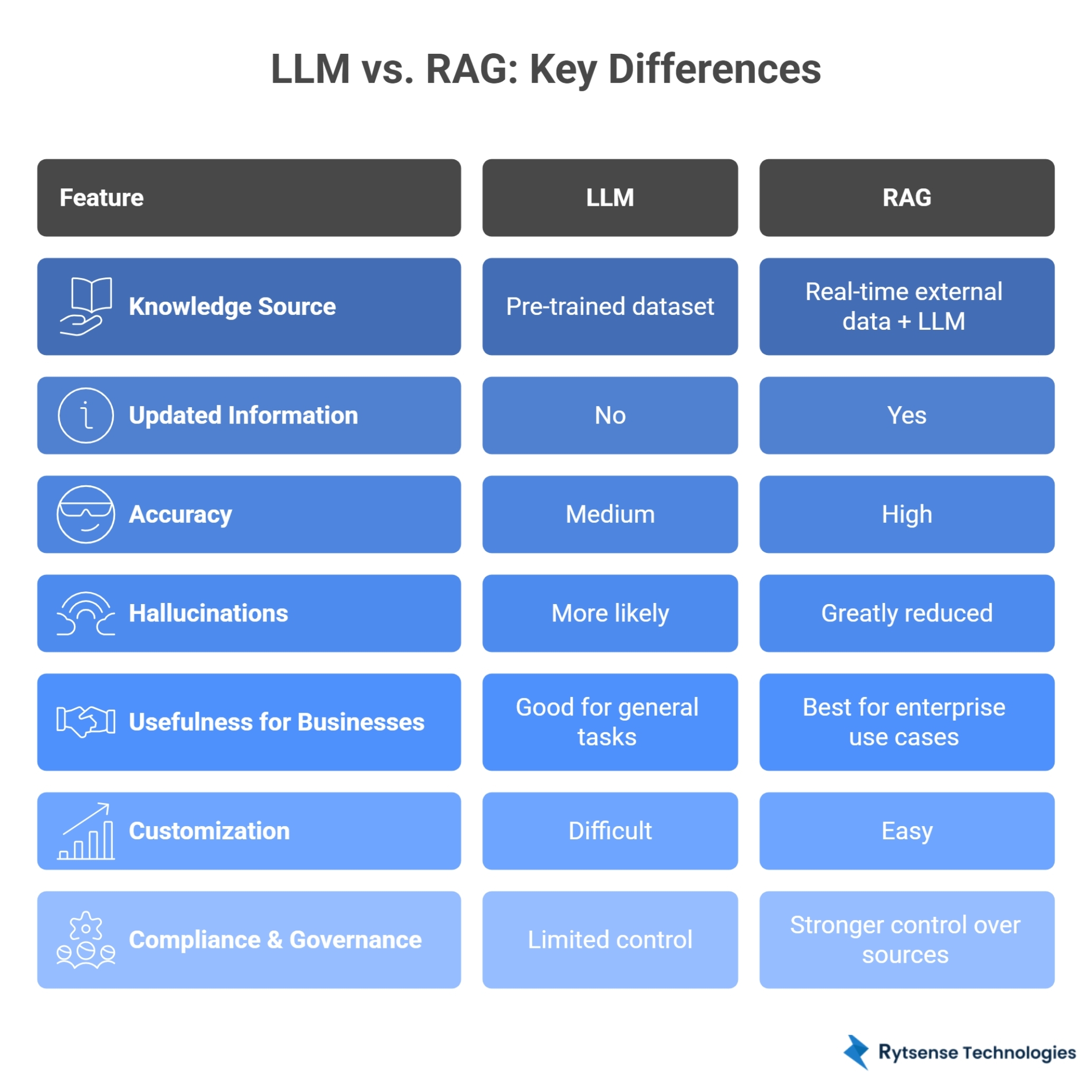

3. RAG vs LLM: The Key Differences

While LLMs and RAG work together, they serve very different purposes. The following breakdown highlights why RAG is becoming the standard for enterprise AI development.

| Feature | LLM | RAG |
| --- | --- | --- |
| Knowledge Source | Pre-trained dataset | Real-time external data + LLM |
| Updated Information | No | Yes |
| Accuracy | Medium | High |
| Hallucinations | More likely | Greatly reduced |
| Business Utility | Good for general tasks | Ideal for enterprise use |
| Customization | Difficult | Easy |
| Compliance | Limited | Strong |

The takeaway: If your AI system needs accuracy, context, recency, compliance, or private data, RAG is essential. If you need general understanding or creative generation, an LLM alone is often enough.

1. Knowledge Source

LLM → Pre-trained Dataset

An LLM learns from a massive dataset during training—books, websites, articles, code, documentation, research papers, etc. Once training ends, its knowledge becomes static. It cannot learn new company data, access private files, or retrieve live information. It answers based on what it remembers from training.

RAG → Real-time External Data + LLM

RAG adds an external knowledge layer where the AI can search:

- PDFs

- Internal documents

- Product manuals

- Policies

- CRM data

- Knowledge bases

- Vector databases
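To make the "search" step more concrete, here is a rough sketch of how a vector-style lookup over such sources might work. Everything in it is an assumption for illustration: `TinyVectorIndex` is a hypothetical class, and the word-count `embed` function is only a stand-in for the learned embedding model that a real vector database would rely on.

```python
import math
import re
from collections import Counter

def embed(text):
    """Toy embedding: word counts. Real systems use a learned embedding model."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))

def cosine(a, b):
    """Cosine similarity between two sparse word-count vectors."""
    dot = sum(a[word] * b[word] for word in a if word in b)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0

class TinyVectorIndex:
    """Hypothetical in-memory index standing in for a vector database."""

    def __init__(self):
        self.entries = []  # list of (doc_id, vector) pairs

    def add(self, doc_id, text):
        self.entries.append((doc_id, embed(text)))

    def search(self, query, top_k=2):
        """Return the top_k (doc_id, similarity) pairs for the query."""
        query_vec = embed(query)
        ranked = sorted(
            self.entries,
            key=lambda entry: cosine(query_vec, entry[1]),
            reverse=True,
        )
        return [(doc_id, cosine(query_vec, vec)) for doc_id, vec in ranked[:top_k]]

index = TinyVectorIndex()
index.add("product_manual", "Hold the power button for five seconds to reset the device")
index.add("hr_policy", "Employees accrue vacation days every month")
index.add("crm_note", "Customer asked about pricing for the premium plan")

results = index.search("how do I reset the device", top_k=1)
print(results)
```

Swapping the toy `embed` for a real embedding model changes the quality of the matches, but the add-then-search pattern stays the same.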

2. Updated Information

LLM → No Updates

An LLM’s knowledge is frozen at its training cutoff. If your policies, product pricing, or business strategies change, the LLM won’t know. This can cause outdated responses, inaccurate instructions, or wrong business information.

RAG → Always Up to Date

Because RAG retrieves data at the moment of answering, the AI stays fully updated. This is essential for enterprises with evolving operations, compliance-driven industries, and customer support knowledge bases.
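A tiny sketch shows why retrieval at answer time keeps responses current. The `knowledge_base` dictionary, the `retrieve_context` helper, and the pricing text are hypothetical values chosen for the example; the idea is simply that editing the stored document changes what the model sees on the next question, with no retraining involved.

```python
# Hypothetical knowledge base keyed by topic; values are illustrative only.
knowledge_base = {"pricing": "The Pro plan costs $40 per month."}

def retrieve_context(topic):
    """Look up the current text at question time, not at training time."""
    return knowledge_base.get(topic, "")

before = retrieve_context("pricing")

# The business updates its pricing document; the model itself is untouched.
knowledge_base["pricing"] = "The Pro plan costs $45 per month."

after = retrieve_context("pricing")

print("Before update:", before)
print("After update: ", after)
```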

3. Accuracy

LLM → Medium Accuracy

LLMs try their best to “guess” answers when they don’t have exact information. They may sound confident, but they can still be wrong. LLMs work well for creative content, general knowledge, and idea generation.

RAG → High Accuracy

RAG grounds the answer in real documents, which improves factual correctness, consistency, and domain-specific accuracy, as long as the retrieved documents are themselves correct and relevant. Enterprises use RAG when accuracy is mission-critical, such as in Legal AI, Healthcare AI, or Banking/fintech AI.

4. Hallucinations

LLM → More Likely

LLMs hallucinate because they rely on probability-based predictions. When unsure, they “fill in the blanks.” This is risky for customer support, internal operations, or financial data interpretation.

RAG → Greatly Reduced

Because RAG steers the model toward real information supplied in the prompt, hallucinations drop significantly. AI responses can cite the actual source and stay consistent with your documents.
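One common way to get source citations is to number the retrieved passages in the prompt and then map the markers in the answer back to document names. The sketch below assumes that convention; the function names, the passage data, and the sample `answer` string (standing in for a model response) are all hypothetical.

```python
import re

def format_context(passages):
    """Number each retrieved passage so the model can cite it as [n]."""
    return "\n".join(
        f"[{i}] ({p['source']}) {p['text']}" for i, p in enumerate(passages, 1)
    )

def cited_sources(answer, passages):
    """Map [n] markers in the answer back to source names; skip invalid numbers."""
    numbers = {int(n) for n in re.findall(r"\[(\d+)\]", answer)}
    return sorted(
        passages[n - 1]["source"] for n in numbers if 1 <= n <= len(passages)
    )

# Illustrative retrieved passages (hypothetical file names and text).
passages = [
    {"source": "refund_policy.pdf", "text": "Refunds are accepted within 30 days."},
    {"source": "warranty.pdf", "text": "Hardware is covered for one year."},
]

context = format_context(passages)

# Stand-in for a model response that follows the [n] citation convention.
answer = "Refunds are accepted within 30 days [1]."

print(context)
print(cited_sources(answer, passages))
```

A marker that points at a passage number that was never supplied is ignored here; a stricter system might treat it as a signal that the answer needs review.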

5. Usefulness for Businesses

LLM → Good for General Tasks

LLMs are excellent for content generation, coding help, summaries, and marketing content—tasks that do not require access to private data.

RAG → Best for Enterprise Use Cases

RAG is designed for businesses that need AI to work with their own knowledge. RAG-powered use cases include AI assistants trained on internal docs, automated policy-based decision making, and technical support chatbots.

6. Customization

LLM → Difficult

Customizing an LLM’s internal knowledge requires retraining or fine-tuning, which involves large datasets, expensive compute, and significant engineering expertise.

RAG → Easy

Businesses can customize RAG simply by uploading documents or adding new files to a knowledge base. No retraining required, making it ideal for fast deployment and scalability.

7. Compliance & Governance

LLM → Limited Control

LLMs cannot:

- Guarantee the source of an answer

- Restrict themselves to only “approved” information

- Prove where a specific answer came from

This is a compliance issue in industries like:

- Finance

- Insurance

- Healthcare

- Government

- Legal

RAG → Strong Governance & Traceability

RAG allows businesses to:

- Control exactly which documents AI can use

- Track which sources were referenced

- Enforce policy restrictions

- Maintain data security

- Support audit trails
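As a rough illustration of these controls, the sketch below filters retrieved passages against an allowlist and records which sources were used for each question. The `APPROVED_SOURCES` set, the `governed_retrieve` function, and the log fields are assumptions for this example rather than a standard API.

```python
from datetime import datetime, timezone

# Hypothetical allowlist of document sources the AI is permitted to use.
APPROVED_SOURCES = {"compliance_handbook", "privacy_policy"}

# Simple in-memory audit trail; a real system would persist this securely.
audit_log = []

def governed_retrieve(user, question, candidates):
    """Keep only passages from approved sources and record what was used."""
    allowed = [c for c in candidates if c["source"] in APPROVED_SOURCES]
    audit_log.append({
        "time": datetime.now(timezone.utc).isoformat(),
        "user": user,
        "question": question,
        "sources": [c["source"] for c in allowed],
    })
    return allowed

candidates = [
    {"source": "privacy_policy", "text": "Customer data is retained for 12 months."},
    {"source": "random_forum_post", "text": "I think data is kept forever."},
]

allowed = governed_retrieve("analyst_01", "How long is customer data kept?", candidates)
print(allowed)
print(audit_log[-1]["sources"])
```

Because filtering happens before the prompt is built, unapproved text never reaches the model, and the log entry gives auditors a record of which documents informed each answer.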

4. When Should Businesses Use RAG vs LLM?

When deciding whether to use an LLM or a RAG system, it's important to understand what each one is designed to do. The two approaches solve different problems in AI, and the right choice depends on how much accuracy, context, and real-time information your task requires.

✅ Use LLM When You Need:

- Creative content

- General knowledge Q&A

- Idea generation

- Code generation

- Writing assistance

- Summaries of generic topics

✅ Use RAG When You Need:

- AI trained on your private company documents

- Compliance-friendly responses

- Accurate product/service information

- Workflow automation

- AI customer support using internal FAQs

- AI decision-making based on real company data

A simple rule of thumb:

- 👉 LLM for creativity

- 👉 RAG for accuracy
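The checklists above can be condensed into a small decision helper. This is only a sketch of the rule of thumb, with hypothetical parameter names; real projects usually weigh cost, latency, and data sensitivity as well.

```python
def choose_approach(needs_private_data=False,
                    needs_current_info=False,
                    needs_traceable_sources=False):
    """Return "RAG" when any grounding requirement applies, otherwise "LLM"."""
    if needs_private_data or needs_current_info or needs_traceable_sources:
        return "RAG"
    return "LLM"

# A brainstorming tool with no grounding requirements.
print(choose_approach())

# A support bot that must answer from internal FAQs.
print(choose_approach(needs_private_data=True))
```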

5. Real Use Cases for Startups and Enterprises

RAG-powered systems are increasingly becoming the foundation of modern AI applications. While both startups and large enterprises use RAG, their goals, scale, and requirements differ, which leads to very different real-world use cases. Below is a deeper look at how each type of organization benefits.

Startups Use RAG For:

1. Building AI-Powered SaaS Tools

Startups often create AI-powered software products that must answer user questions accurately or generate insights using live data. RAG helps these SaaS tools:

- Reference real documents

- Deliver correct information

- Reduce hallucinations

- Offer enterprise-grade reliability

2. Automating Customer Support

RAG enables startups to build chatbots and support assistants that:

- Pull answers directly from internal FAQs

- Refer to updated policies

- Provide consistent responses

3. Adding AI Features into Mobile Apps

Mobile apps increasingly include:

- Smart search

- Personalized recommendations

- Automated guidance

- On-device knowledge assistants

4. Speeding Up MVP Development

Startups must ship prototypes fast. With RAG:

- They don’t need to train custom models

- AI becomes accurate simply by uploading documents

- New features launch quickly

5. Knowledge-Based Chatbots

These chatbots can:

- Answer technical product questions

- Pull exact instructions from manuals

- Educate new users or onboard customers

6. Product Recommendation Systems Using Real Data

Startups can build intelligent recommendation engines that:

- Analyze user preferences

- Retrieve similar products or content

- Improve conversions

Enterprises Use RAG For:

1. AI Assistants Trained on Internal Documents

Large companies have thousands of:

- SOPs

- Policies

- Reports

- Technical manuals

- Knowledge bases

- Compliance documents

2. Supply Chain Optimization

RAG supports operational decision-making by retrieving:

- Inventory records

- Historical performance data

- Vendor agreements

- Logistics documentation

3. Policy-Driven AI Governance

Enterprises must ensure AI:

- Uses approved data sources

- Provides compliant responses

- Respects privacy rules

4. Fraud Detection and Compliance Systems

By referencing:

- Transaction logs

- Compliance rules

- Risk assessments

- Case histories

5. AI-Powered Analytics Dashboards

Instead of static dashboards, RAG enables:

- Natural language querying

- Context-aware insights

- Source-supported explanations

6. Contract Analysis and Legal AI Systems

Large enterprises handle complex contracts daily. RAG systems can:

- Retrieve relevant clauses

- Compare versions

- Summarize terms

- Flag risks

- Ensure compliance

Both startups and enterprises benefit from custom AI development services that combine RAG, LLMs, and machine learning to build scalable AI systems.

6. How RAG Improves AI Accuracy, Trust, and Decision-Making

RAG is becoming essential in enterprise AI systems because it improves:

- Accuracy: LLMs guess answers when they don't know; RAG feeds them real facts, which reduces guesswork.

- Transparency: Businesses know exactly which documents the AI used.

- Governance: Organizations can control the data sources allowed.

- Consistency: The AI gives more consistent answers because it references the same official documents each time.

- Data Privacy: Your internal data stays inside your secure environment.

- Better Decision-Making: When AI has access to the latest reports, analytics, and documents, the decisions it suggests are data-backed, explainable, and traceable.

This is why RAG is widely used in healthcare, finance, insurance, real estate, and enterprise software development services.

7. RAG and LLM in AI Development Services

Modern AI development companies integrate RAG and LLM to build advanced AI applications such as:

- Intelligent chatbots

- AI-powered search systems

- Enterprise data assistants

- Automated analytics tools

- Knowledge-based enterprise platforms

- Product recommendation engines

- AI-driven customer support

A typical AI development process includes:

1. Data collection

2. Model training

3. RAG pipeline setup

4. Vector database integration

5. AI application development

6. Testing & optimization

7. Deployment

The combination of LLMs + RAG + machine learning + neural networks + natural language processing creates AI solutions that are powerful, scalable, and enterprise-ready.
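As one example of what RAG pipeline setup can involve, documents are often split into overlapping chunks before they are indexed in a vector database. The article does not prescribe a chunking method, so the sketch below is an assumption: `chunk_words` is a hypothetical helper, and the chunk size and overlap are arbitrary values that would be tuned per project.

```python
def chunk_words(text, chunk_size=50, overlap=10):
    """Split text into chunks of chunk_size words, each sharing `overlap` words
    with the previous chunk so that sentences cut at a boundary keep context."""
    if chunk_size <= 0 or overlap < 0 or overlap >= chunk_size:
        raise ValueError("require chunk_size > 0 and 0 <= overlap < chunk_size")
    words = text.split()
    step = chunk_size - overlap
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + chunk_size]))
        # Stop once this chunk has reached the end of the document.
        if start + chunk_size >= len(words):
            break
    return chunks

# Illustrative 120-word document made of placeholder tokens.
document = " ".join(f"w{i}" for i in range(120))
chunks = chunk_words(document, chunk_size=50, overlap=10)

print(len(chunks))
print(chunks[1].split()[0])
```

Each chunk would then be embedded and stored, so that retrieval returns a focused passage instead of an entire document.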

8. Challenges of LLMs and How RAG Solves Them

LLM Challenge 1: Hallucinations

LLMs sometimes generate confident but wrong answers.

RAG Solution: Provides factual documents as grounding, forcing the model to reference real information.

LLM Challenge 2: Outdated Information

LLMs do not know new events or updated product details.

RAG Solution: Retrieves the latest information every time, keeping responses current.

LLM Challenge 3: Limited Domain Knowledge

LLMs are not experts in your company’s specific processes.

RAG Solution: Ingests all your business documents (SOPs, manuals) to provide context-aware expertise.

LLM Challenge 4: Compliance & Security Risks

LLMs cannot guarantee source authenticity or private data safety.

RAG Solution: Enforces approved, traceable sources and keeps data within your secure environment.

LLM Challenge 5: Scaling Issues

Retraining large models is expensive and technically challenging.

RAG Solution: Update only the knowledge base (by uploading new docs), not the whole model.

9. Future of AI: Will RAG Replace Traditional LLMs?

RAG will not replace LLMs. Instead, RAG will become the default way businesses use LLMs.

Why?

Because companies need AI systems that:

- Use their private data

- Always stay updated

- Avoid hallucinations

- Improve decision intelligence

- Integrate into existing systems

- Support enterprise workflows

- Provide consistent, trustworthy outputs

By 2026 and beyond, experts predict that 90% of enterprise AI applications will use a RAG pipeline to enhance LLM capabilities. This shift will push more companies to adopt:

- Custom AI development

- AI integration into existing systems

- Predictive analytics

- AI-driven business intelligence

- Multi-agent AI systems

- Generative AI applications

- AI-powered automation

10. Conclusion

LLMs are powerful generative AI systems that understand and generate text. RAG enhances these models by giving them real-time access to your business knowledge, ensuring accuracy, context, and governance.

For startups, RAG makes product development faster and cheaper. For enterprises, it provides reliability, compliance, and trust—key factors in scaling AI systems. If you want to build AI-powered applications that:

- Use your internal data

- Deliver accurate, real-time answers

- Support decision-making

- Improve automation

- Reduce operational cost

- Enable digital transformation

…then RAG-powered LLM solutions are the smartest choice.

Meet the Author

Co-Founder, Rytsense Technologies

Karthik is the Co-Founder of Rytsense Technologies, where he leads cutting-edge projects at the intersection of Data Science and Generative AI. With nearly a decade of hands-on experience in data-driven innovation, he has helped businesses unlock value from complex data through advanced analytics, machine learning, and AI-powered solutions. Currently, his focus is on building next-generation Generative AI applications that are reshaping the way enterprises operate and scale. When not architecting AI systems, Karthik explores the evolving future of technology, where creativity meets intelligence.