Key Takeaways

Multimodal generative AI can understand and generate text, images, audio, video, and code together.

Examples like ChatGPT Vision, Google Gemini, Microsoft Copilot, and Sora show real-world multimodal capabilities.

It provides deeper context and more accurate outputs than single-modality AI models.

Businesses benefit through automation, faster decision-making, defect detection, document analysis, and enhanced customer experiences.

Startups use multimodal AI to build innovative products faster with smaller teams.

Enterprises rely on multimodal AI to automate complex workflows and improve operational efficiency.

Implementing multimodal AI requires handling data complexity, integration challenges, and high compute needs.

AI development services help companies build custom multimodal solutions, integrate models, and deploy them at scale.

The future of multimodal AI includes autonomous agents, real-time AI understanding, and advanced generative 3D capabilities.

What Is an Example of Multimodal Generative AI? (With Simple Explanation + Real Use Cases)

Multimodal generative AI refers to AI models that can understand and generate multiple types of data at once, such as text, images, audio, video, and code.

A simple example:

👉 ChatGPT (Vision + Audio + Text) — It can see an image, understand it, and respond in text or speech.

Now let's unpack what multimodal generative AI really is, how it works, why businesses from startups to large enterprises are rapidly adopting it, and how AI development services enable companies to build custom multimodal apps tailored to their workflows.

1. What Is Multimodal Generative AI?

Multimodal generative AI is an advanced form of artificial intelligence that can process, interpret, and generate outputs from different data types together: text, speech, images, video, sensor data, and even code. This is foundational for creating custom AI solutions, enterprise automation, and next-gen AI applications.

Unlike traditional AI models trained for a single medium (for example, just NLP or just computer vision), multimodal intelligence combines multiple inputs to understand context more deeply. Companies building digital products increasingly rely on artificial intelligence development services to integrate these capabilities.

This allows businesses to build custom AI solutions that mimic human perception—seeing, reading, listening, analyzing, and responding seamlessly.

2. A Simple, Clear Example of Multimodal Generative AI (in More Detail)

➡️ Example: ChatGPT with Vision + Audio

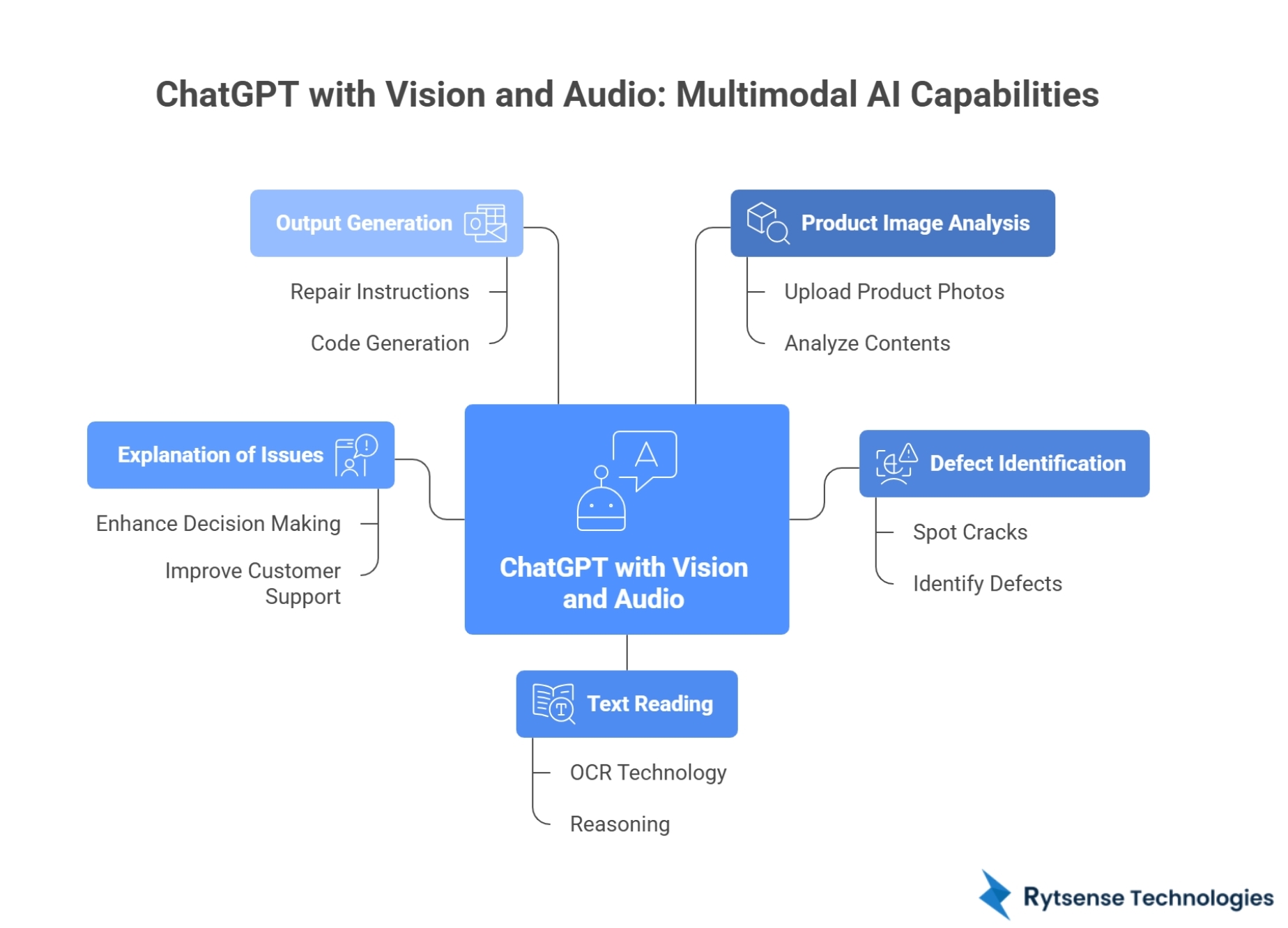

One of the most practical examples of multimodal generative AI is ChatGPT with Vision and Audio. This model showcases the true power of multimodal AI development, allowing businesses to integrate visual, text, and audio capabilities into their workflows.

Unlike traditional AI models that only understand text, ChatGPT can now interpret images, spoken language, documents, and screenshots — and respond intelligently.

Here’s what it can do in detail:

1. Look at a product image

Businesses using custom AI development or AI app development services can upload product photos, UI designs, receipts, diagrams, damaged items, or machinery parts. The model visually analyzes the contents just like a human.

2. Identify defects or issues

ChatGPT can spot cracks, dents, incorrect assembly, broken components, UI issues, and manufacturing defects—capabilities useful across manufacturing AI, quality automation, and enterprise AI systems.

3. Read text inside the image

The model reads labels, invoice totals, handwritten notes, specifications, and error codes. It combines OCR with reasoning, meaning it not only extracts the text but also interprets it. This is used heavily in AI-powered automation workflows.

4. Explain what’s wrong and why

It delivers insights much like a human expert—enhancing AI-driven decision making, customer support, and defect reporting, such as:

- “The screen is cracked on the left side near the bezel.”

- “The circuit board is missing a capacitor.”

- “The UI button overlaps with the input field, causing usability issues.”

5. Generate relevant outputs

From repair instructions to code generation, documentation, audio responses, and reports—this makes multimodal models essential for AI development companies building enterprise-grade tools.
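To make the steps above more concrete, here is a minimal sketch of how an image and a question might be packaged into a single request. It assumes the content-parts message layout used by OpenAI-style chat APIs; other providers use different field names. The function name `build_vision_message` and the placeholder image bytes are illustrative, and no network call is made.

```python
import base64
import json


def build_vision_message(image_bytes: bytes, question: str, mime: str = "image/png") -> dict:
    """Package an image and a text question into one user message.

    Assumption: the layout follows the content-parts format used by
    OpenAI-style chat APIs. Adjust the fields for other providers.
    """
    encoded = base64.b64encode(image_bytes).decode("ascii")
    return {
        "role": "user",
        "content": [
            {"type": "text", "text": question},
            {
                "type": "image_url",
                "image_url": {"url": f"data:{mime};base64,{encoded}"},
            },
        ],
    }


# Placeholder bytes stand in for a real product photo.
fake_image = b"\x89PNG placeholder"
message = build_vision_message(fake_image, "Is there any visible damage on this part?")
print(json.dumps(message, indent=2))
```

The point of the sketch is that text and image travel in the same message, so the model can reason over both at once rather than handling them in separate steps.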

Other Popular Multimodal Generative AI Examples (with brief details)

1. Google Gemini (text + code + images + video)

Unified multimodal model ideal for enterprise AI integration and machine learning applications. Gemini is built from the ground up as a multimodal model. It can analyze videos, interpret images, write code, summarize documents, solve math problems with diagrams, and understand audio.

Why it’s multimodal: Gemini doesn’t switch between separate models; it uses one unified system to understand all data types together.

2. Microsoft Copilot (vision + text + code)

Copilot integrates multimodal AI across Microsoft 365 and GitHub. It reads images, documents, charts, and screenshots, then generates text, explanations, or code.

Example: Upload a screenshot of an error → Copilot explains the cause and writes the fix.

3. DALL·E 3 (text → image generation)

DALL·E 3 turns written instructions into highly detailed images. It understands complex descriptions, composition, style, and context, and it is widely used in AI-powered creative solutions.

Why it’s multimodal: It uses language understanding to produce visual content, bridging two modalities — text and images.

4. Sora by OpenAI (text → video generation)

Sora creates realistic videos from text prompts. It models motion, physics, lighting, and environment behavior.

Why it’s multimodal: It transforms natural language inputs into dynamic visual sequences, combining language reasoning with video generation.

Why These Models Matter

These AI systems mark the beginning of a new era where AI becomes:

- More intuitive

- More human-like

- More useful in real business applications

- Able to understand the world using multiple senses

This is why multimodal generative AI is considered a major breakthrough in artificial intelligence and enterprise automation.

3. How Multimodal AI Works (Explained Simply)

Multimodal AI learns from text, images, audio, and video together — similar to how humans learn.

A child who sees, hears, and reads about the same thing forms a combined mental model of it. Multimodal AI does the same through neural networks that learn joint representations across different data types.

Key components:

- Large Language Models (LLMs)

- Computer Vision Models

- Audio/Speech Models

- Fusion Models that combine it all

- Training on multi-format datasets

These are the foundation for modern AI and machine learning development services, enabling smarter business applications.
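As a rough illustration of what a fusion step can look like, the sketch below joins per-modality embeddings into one vector and compares items in that shared space. It is a toy example under stated assumptions: the embedding values are made up, the helper names (`l2_normalize`, `fuse`, `cosine`) are hypothetical, and simple concatenation stands in for the learned fusion layers that production models use.

```python
import math


def l2_normalize(vec):
    """Scale a vector to unit length so each modality contributes equally."""
    norm = math.sqrt(sum(x * x for x in vec))
    return [x / norm for x in vec] if norm else list(vec)


def fuse(text_vec, image_vec, audio_vec):
    """Late fusion by concatenation: normalize each modality, then join."""
    fused = []
    for vec in (text_vec, image_vec, audio_vec):
        fused.extend(l2_normalize(vec))
    return fused


def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


# Made-up encoder outputs; real encoders produce hundreds of dimensions.
dog_photo = fuse([0.9, 0.1], [0.8, 0.2, 0.1], [0.7, 0.3])
dog_video = fuse([0.8, 0.2], [0.9, 0.1, 0.2], [0.6, 0.4])
car_photo = fuse([0.1, 0.9], [0.1, 0.9, 0.8], [0.2, 0.8])

# Items about the same concept should sit closer together in the joint space.
print(cosine(dog_photo, dog_video) > cosine(dog_photo, car_photo))
```

Concatenation is the simplest fusion choice; it keeps every modality's signal visible in the joint vector, which makes the idea easy to inspect even though real systems learn the combination during training.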

4. Why Multimodal Generative AI Is a Game-Changer for Businesses

Modern enterprises want AI that can read documents, analyze dashboards, interpret images from CCTV/IoT, listen to customer calls, automate processes, and integrate data sources. This requires multimodal intelligence combined with AI development services and custom AI integration.

| Business Advantage |
|---|
| Faster decision-making |
| Reduced human dependency |
| Automated real-time analysis |
| Higher accuracy with multi-source inputs |
| Better customer experiences |

This is why many companies now prefer partnering with an expert AI development company in the USA or globally.

5. Real Use Cases Across Industries (2025 Trends)

Healthcare

- AI reads X-rays + doctor notes → generates diagnosis summary

- Multimodal chatbots assist patients using voice + text

E-commerce

- AI analyzes product images + descriptions → auto-generates listings

- Personalized recommendations using visual + behavioral data

Manufacturing

- Defect detection using images + sensor data

- Automated maintenance predictions

Finance

- AI reads financial reports + trend graphs → generates investment insights

- Voice + text fraud detection

Real Estate

- Property images + location data + text descriptions → valuation models

- Virtual staging via generative AI

Education

- AI tutors use text + voice + images for multimodal learning

Automotive

- Autonomous vehicles rely heavily on multimodal AI (camera + lidar + radar)

Software Development

- AI reads UI screenshots → generates code

- Developers use multimodal copilots to build apps faster

These industries rely on generative AI development companies and custom AI solutions to scale rapidly.
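The manufacturing use case above (defect detection using images + sensor data) can be sketched as a simple decision rule that weighs both signals. This is only an illustration: the `Inspection` fields, the `flag_defect` function, and every threshold value are assumptions chosen for the example, not figures from a real production line.

```python
from dataclasses import dataclass


@dataclass
class Inspection:
    part_id: str
    image_defect_score: float  # 0..1, from a hypothetical vision model
    vibration_mm_s: float      # reading from a hypothetical vibration sensor


def flag_defect(item: Inspection,
                image_threshold: float = 0.8,
                vibration_limit: float = 7.0,
                combined_threshold: float = 0.5) -> bool:
    """Flag a part if one signal is strong alone, or both are moderately high."""
    if item.image_defect_score >= image_threshold:
        return True
    if item.vibration_mm_s >= vibration_limit:
        return True
    # Moderate evidence from both modalities together also counts.
    vibration_ratio = item.vibration_mm_s / vibration_limit
    return (item.image_defect_score >= combined_threshold
            and vibration_ratio >= combined_threshold)


parts = [
    Inspection("A1", 0.9, 1.0),  # clear visual defect
    Inspection("A2", 0.6, 4.0),  # moderate on both signals
    Inspection("A3", 0.6, 1.0),  # moderate image score only
    Inspection("A4", 0.1, 8.0),  # vibration over the limit
]
flags = {p.part_id: flag_defect(p) for p in parts}
print(flags)
```

Part A2 shows why combining modalities helps: neither signal crosses its own threshold, yet together they are enough to warrant a closer look.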

6. Benefits for Startups vs Enterprises

Startups

- 🚀 Build innovative apps faster

- 💰 Reduce development cost using pre-trained multimodal models

- 👥 Launch with smaller teams using AI app development services

Enterprises

- ⚙️ Automate complex workflows

- 📊 Improve decision-making

- 🤖 Enhance CX with multimodal assistants

- 📈 Increase operational efficiency

Both benefit from partnering with an AI development company for seamless deployment.

7. Core Technologies Behind Multimodal Generative AI

Transformers, diffusion models, vision-language models (VLMs), GANs, reinforcement learning, and large language models are all essential technologies used in AI and machine learning development services.

These AI technologies work together to analyze raw data and generate meaningful outputs.

8. Key Challenges Businesses Face With Multimodal AI

Even though multimodal AI is powerful, companies often struggle with:

- ⚠️ High computational cost

- ⚠️ Training data complexity

- ⚠️ Model alignment issues

- ⚠️ Integration with existing systems

- ⚠️ Ensuring data privacy

- ⚠️ Maintaining accuracy across modalities

This is why organizations partner with an AI development company to build secure, scalable AI systems.

9. How AI Development Services Help You Build a Multimodal Solution

A skilled AI development partner helps businesses:

- Identify the right AI model

- Collect multimodal datasets

- Train and fine-tune the model

- Integrate AI into existing software systems

- Build AI apps for web/mobile

- Deploy and maintain the solution

Custom AI development ensures the system aligns with your business goals—whether it's predictive analytics, workflow automation, or generative AI solutions.

10. Who Should Use Multimodal Generative AI?

This technology is ideal for anyone handling text, images, audio, video, or documents, all of whom can benefit from custom AI solutions.

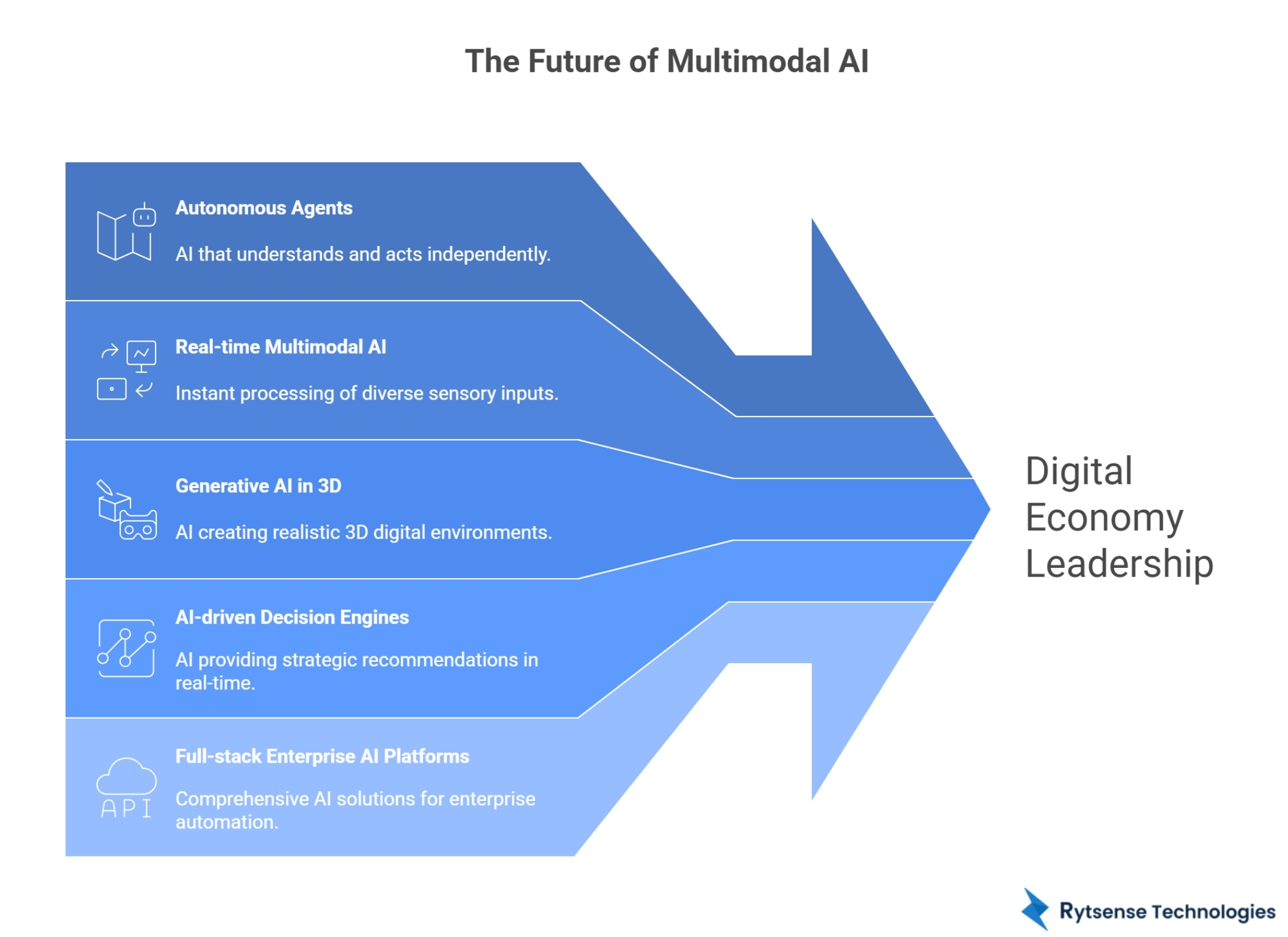

11. Future Trends: What Comes After Multimodal AI?

From 2025 onward, expect these trends to accelerate:

Autonomous agents

AI that not only understands but takes action.

Real-time multimodal AI

Instant understanding of video, speech, images, and data streams.

Generative AI in 3D

AI-generated digital twins, simulations, and environments.

AI-driven decision engines

Real-time predictions + strategic recommendations.

Full-stack enterprise AI platforms

For complete workflow automation.

Companies investing in multimodal AI today will lead the digital economy tomorrow.

12. Conclusion

Multimodal generative AI is transforming how businesses adopt artificial intelligence.

From ChatGPT with Vision to enterprise multimodal assistants, AI can now see, listen, read, interpret, and generate, making it essential for digital transformation. Startups innovate faster. Enterprises automate smarter.

And both succeed faster with AI development services and custom multimodal AI applications.

Meet the Author

Co-Founder, Rytsense Technologies

Karthik is the Co-Founder of Rytsense Technologies, where he leads cutting-edge projects at the intersection of Data Science and Generative AI. With nearly a decade of hands-on experience in data-driven innovation, he has helped businesses unlock value from complex data through advanced analytics, machine learning, and AI-powered solutions. Currently, his focus is on building next-generation Generative AI applications that are reshaping the way enterprises operate and scale. When not architecting AI systems, Karthik explores the evolving future of technology, where creativity meets intelligence.